Thank you for being here! Let’s take a deep breath and dive into the best LLM papers of this week!

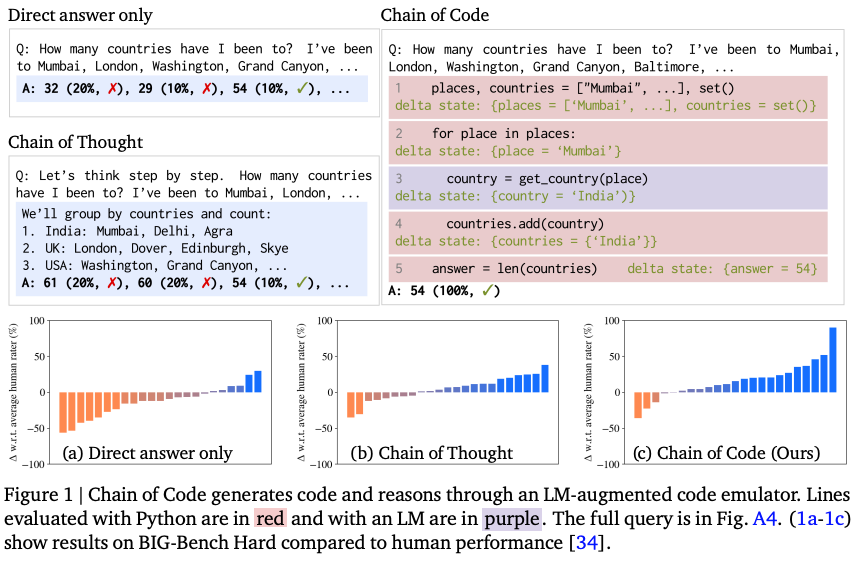

1. Chain of Code: Reasoning with a Language Model-Augmented Code Emulator

🌐 Author(s): Chengshu Li, et al. from Google DeepMind

📅 Publication Date: Dec 07, 2023

✨ Key Insights:

What’s New? They proposed Chain of Code (CoC), which improves LM code-driven reasoning. The key idea is to encourage LMs to write semantic sub-tasks in a program as flexible pseudocode; lines the interpreter cannot execute are caught and handed off to an LM to simulate. CoC outperformed Chain of Thought (CoT) across a variety of benchmarks.

Behind the New. They hypothesized that LMs can leverage code-writing to improve Chain of Thought reasoning not only for logic and arithmetic tasks, but also for semantic ones.

So, How can we use this? Thinking step by step with pseudocode can improve your LLM’s reasoning ability!
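The hand-off idea can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: `simulate_with_lm` is a hypothetical stub standing in for a real model call, and its answer is hard-coded for the one semantic line in the toy `program`. Each line is tried in the Python interpreter first, and any line that raises is passed to the stub, which returns the state update.

```python
from typing import Any, Callable, Dict, List

State = Dict[str, Any]


def simulate_with_lm(line: str, state: State) -> State:
    """Hypothetical stand-in for an LM that 'executes' a line it is shown.

    A real system would prompt a model with the line and the current state.
    The answer is hard-coded for the single semantic line in `program` below
    so that the sketch runs offline.
    """
    fruits = {"apple", "kiwi"}
    return {"flags": [word in fruits for word in state["words"]]}


def run_chain_of_code(
    lines: List[str],
    lm: Callable[[str, State], State] = simulate_with_lm,
) -> State:
    """Run each line in the interpreter; fall back to the LM stub on failure."""
    state: State = {}
    for line in lines:
        try:
            exec(line, {}, state)  # try the real interpreter first
        except Exception:
            state.update(lm(line, state))  # hand the failing line to the LM stub
    return state


program = [
    "words = ['apple', 'car', 'kiwi']",
    "flags = [is_fruit(w) for w in words]",  # is_fruit is never defined: a semantic sub-task
    "count = sum(flags)",
]

state = run_chain_of_code(program)
print(state["count"])  # 2
```

The arithmetic (`sum`) stays in the interpreter, where it is exact, and only the judgment call ("is this a fruit?") goes to the model.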

🔗 Read Full Paper, Explore the Demo

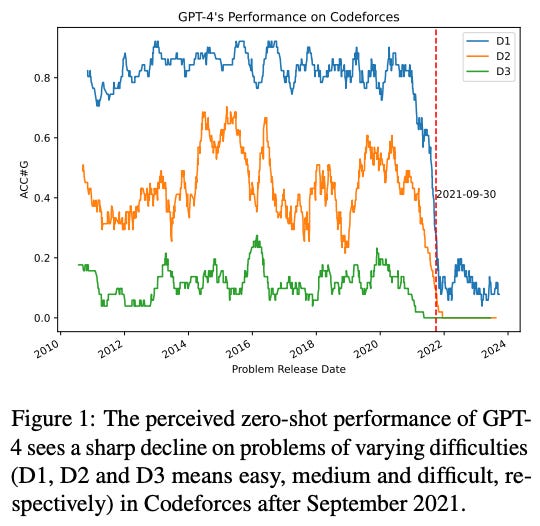

2. Competition-Level Problems Are Effective Evaluators of LLMs

🌐 Author(s): Yiming Huang, et al. from Microsoft Research Asia

📅 Publication Date: Dec 05, 2023

✨ Key Insights:

What’s New? Surprisingly, GPT-4’s perceived performance drops off a cliff on problems released after September 2021, consistently across all difficulty levels and problem types, which suggests potential data contamination.

Behind the New. They explored various approaches, such as fine-tuning, Chain-of-Thought prompting, and problem description simplification, but none of them consistently mitigated the challenges.

So, How can we use this? Can we really say that GPT-4 is good at coding? It may simply have seen a lot of data and be able to solve problems similar to those it has seen, while lacking the ability to apply what it knows to genuinely new problems. 🤔
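If you want to look for a similar cliff in your own evaluations, one simple check is to split results by problem release date and compare solve rates on each side of the cutoff. The sketch below assumes a cutoff of the end of September 2021, and `toy_results` is made-up illustrative data, not numbers from the paper.

```python
from datetime import date
from typing import List, Tuple

CUTOFF = date(2021, 9, 30)  # assumption: end of September 2021


def solve_rate(outcomes: List[bool]) -> float:
    """Fraction of solved problems; NaN when the bucket is empty."""
    return sum(outcomes) / len(outcomes) if outcomes else float("nan")


def solve_rates_by_cutoff(
    results: List[Tuple[date, bool]], cutoff: date = CUTOFF
) -> Tuple[float, float]:
    """Return (solve rate on or before cutoff, solve rate after cutoff)."""
    before = [solved for released, solved in results if released <= cutoff]
    after = [solved for released, solved in results if released > cutoff]
    return solve_rate(before), solve_rate(after)


# Made-up (release date, solved?) pairs, for illustration only.
toy_results = [
    (date(2021, 3, 1), True),
    (date(2021, 6, 15), True),
    (date(2021, 8, 20), False),
    (date(2021, 11, 2), False),
    (date(2022, 4, 9), False),
    (date(2022, 7, 30), True),
]

rate_before, rate_after = solve_rates_by_cutoff(toy_results)
print(f"before: {rate_before:.2f}, after: {rate_after:.2f}")
```

A large gap between the two rates at a fixed difficulty level is a hint of contamination, not proof, so it is worth controlling for problem type and difficulty as the paper does.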

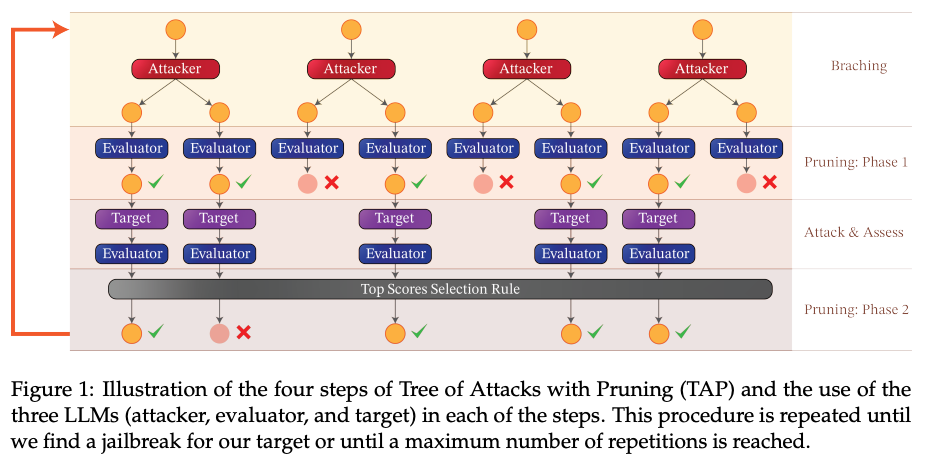

3. Tree of Attacks: Jailbreaking Black-Box LLMs Automatically

🌐 Author(s): Anay Mehrotra, et al. from Yale University

📅 Publication Date: Dec 04, 2023

✨ Key Insights:

What’s New? They presented Tree of Attacks with Pruning (TAP), an automated method for generating jailbreaks that only requires black-box access to the target LLM. TAP found jailbreaks for GPT-4 on more than 80% of the prompts tested, using only a small number of queries.

Behind the New. They reported that small unaligned LLMs can be used to jailbreak large LLMs. Jailbreaking also had a low cost, and more capable LLMs (GPTs, PaLM-2) were easier to break.

So, How can we use this? Now we can automatically check whether our LLMs are prone to producing harmful outputs with TAP!
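For red-teaming your own models, the overall control flow (branch, prune, query, prune again) can be sketched as a generic tree search. This is a rough sketch under stated assumptions, not the authors' code: `propose`, `on_topic`, and `score` are hypothetical callables standing in for the attacker model, the evaluator's relevance check, and the evaluator's rating of the target's response. The toy versions below operate on plain strings so the example runs offline.

```python
from typing import Callable, List, Optional, Tuple


def tree_search_with_pruning(
    root: str,
    propose: Callable[[str], List[str]],  # hypothetical attacker: candidate -> refinements
    on_topic: Callable[[str], bool],      # hypothetical evaluator: relevance check
    score: Callable[[str], int],          # hypothetical: query target, rate response 1-10
    width: int = 2,
    depth: int = 4,
    success: int = 10,
) -> Tuple[Optional[str], int]:
    """Return (first candidate reaching `success` or None, target queries used)."""
    leaves = [root]
    queries = 0
    for _ in range(depth):
        # Branch: expand every surviving leaf into several refinements.
        children = [child for leaf in leaves for child in propose(leaf)]
        # Prune, phase 1: drop off-topic candidates before spending target queries.
        children = [child for child in children if on_topic(child)]
        if not children:
            break
        scored = []
        for child in children:
            queries += 1
            rating = score(child)
            if rating >= success:
                return child, queries
            scored.append((rating, child))
        # Prune, phase 2: keep only the `width` highest-rated candidates.
        scored.sort(key=lambda pair: pair[0], reverse=True)
        leaves = [child for _, child in scored[:width]]
    return None, queries


# Toy stand-ins on strings, for illustration only.
result = tree_search_with_pruning(
    root="",
    propose=lambda p: [p + "a", p + "b"],
    on_topic=lambda p: not p.endswith("bb"),
    score=lambda p: min(10, 3 * p.count("a") + 1),
)
print(result)  # ('aaa', 6)
```

Pruning before the target is queried is what keeps the query count small: off-topic branches never cost a call.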

4. LLM as OS (llmao), Agents as Apps: Envisioning AIOS, Agents and the AIOS-Agent Ecosystem

🌐 Author(s): Yingqiang Ge, et al. from Rutgers University

📅 Publication Date: Dec 06, 2023

✨ Key Insights:

What’s New? This paper envisions a revolutionary AIOS-Agent ecosystem, where a Large Language Model (LLM) serves as the Artificial Intelligent Operating System (AIOS), an operating system “with soul”.

Behind the New. They proposed AIOS on the view that the impact of LLMs will not be limited to the AI application level; instead, it will in turn revolutionize the design and implementation of computer systems, architecture, software, and programming languages.

So, How can we use this? Today, agents are mostly developed at the application level, but they may soon operate everywhere, including the user level and the hardware/middleware level.

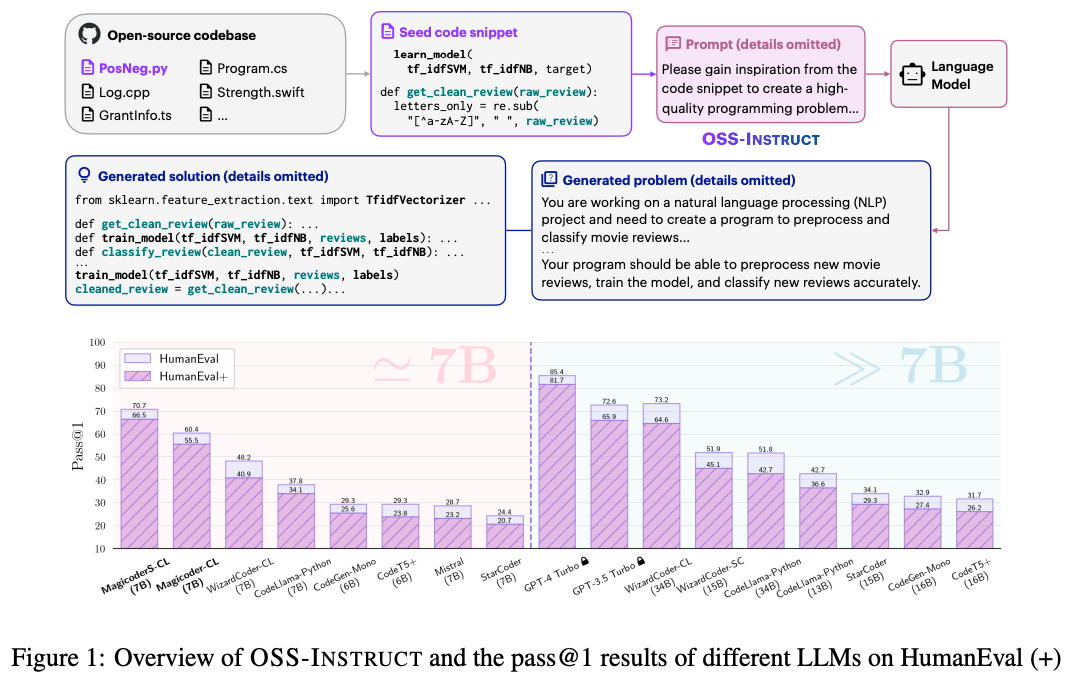

5. Magicoder: Source Code Is All You Need

🌐 Author(s): Yuxiang Wei, et al. from University of Illinois at Urbana-Champaign

📅 Publication Date: Dec 04, 2023

✨ Key Insights:

What’s New? They introduced Magicoder, a series of fully open-source (code, weights, and data) Large Language Models (LLMs) for code that significantly closes the gap with top code models while having no more than 7B parameters.

Behind the New. Data is king. They proposed OSS-Instruct, which learns directly from the vast body of open-source code by generating new coding problems from open-source examples. The data produced by OSS-Instruct is used to fine-tune CodeLlama-Python-7B, resulting in Magicoder.

So, How can we use this? The code, weights, and data are all open for exploration!
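To get a feel for the data-generation idea, here is a minimal sketch of turning an open-source file into a prompt for a teacher model. The template wording, `sample_seed`, and `build_prompt` are all illustrative assumptions, not the prompt or code used in the paper; the actual pipeline lives in the authors' repository.

```python
import random

# Illustrative wording only; this is not the prompt used in the paper.
PROMPT_TEMPLATE = (
    "Gain inspiration from the following code snippet and create a "
    "self-contained coding problem together with a correct solution.\n\n"
    "Code snippet:\n{snippet}\n"
)


def sample_seed(source: str, max_lines: int, rng: random.Random) -> str:
    """Pick a random run of at most `max_lines` consecutive lines from a file."""
    lines = source.splitlines()
    if len(lines) <= max_lines:
        return "\n".join(lines)
    start = rng.randrange(len(lines) - max_lines + 1)
    return "\n".join(lines[start:start + max_lines])


def build_prompt(source: str, max_lines: int = 5, seed: int = 0) -> str:
    """Format a teacher-model prompt around a randomly sampled seed snippet."""
    rng = random.Random(seed)  # seeded so the example is reproducible
    return PROMPT_TEMPLATE.format(snippet=sample_seed(source, max_lines, rng))


example_source = "\n".join(f"line_{i} = {i}" for i in range(10))
prompt = build_prompt(example_source, max_lines=3)
print(prompt)
```

The prompt would then be sent to a teacher LLM, and the generated problem-solution pairs collected as fine-tuning data.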

🔗 Read Full Paper, Explore GitHub Repo, Download HuggingFace Model

6. OneLLM: One Framework to Align All Modalities with Language

🌐 Author(s): Jiaming Han, et al. from MMLab, The Chinese University of Hong Kong

📅 Publication Date: Dec 06, 2023

✨ Key Insights:

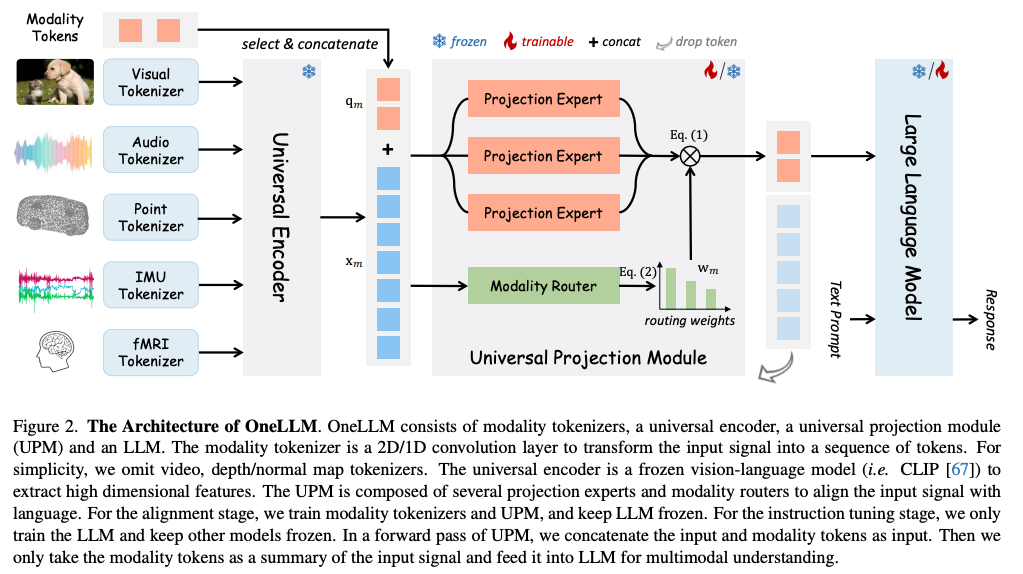

What’s New? They present OneLLM, an MLLM that aligns eight modalities to language using a unified framework.

Behind the New. OneLLM employs a universal encoder and a universal projection module to align multimodal inputs with the LLM. It also utilizes modality tokens to switch between modalities.

So, How can we use this? As universal encoders advance, it may not be long before modality is no longer an issue for LLMs.

🔗 Read Full Paper, Explore GitHub Repo

7. Generative agent-based modeling with actions grounded in physical, social, or digital space using Concordia

🌐 Author(s): Alexander Sasha Vezhnevets, et al. from Google DeepMind

📅 Publication Date: Dec 06, 2023

✨ Key Insights:

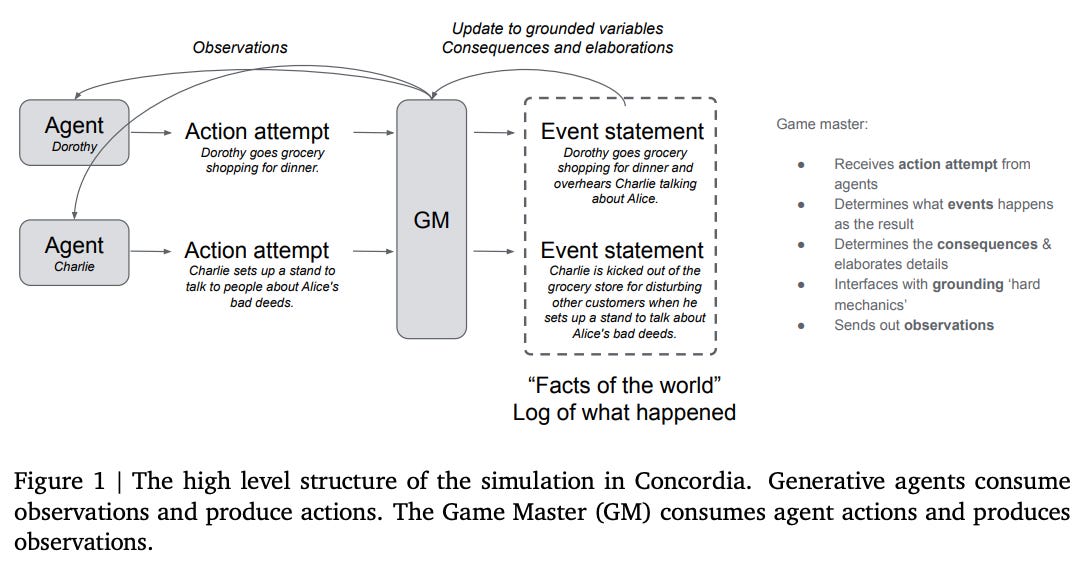

What’s New? They present Concordia, a library to facilitate constructing and working with Generative Agent-Based Models (GABMs) for social and science experiments.

Behind the New. Concordia uses a special agent called the Game Master (GM), which is responsible for simulating the environment where player agents interact (e.g., an election or running a business). Through this, Concordia aims to support experiments in diverse social scenarios.

So, How can we use this? Using agent-simulated experiments to back up social experiments may become the new norm.
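The Game Master pattern can be illustrated with a toy loop in which players state what they attempt and the GM decides what actually happens and records it. This sketch does not use the Concordia API: the `GameMaster` class, `resolve`, and the player functions are hypothetical names, and the callables stand in for LM calls.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Player = Callable[[List[str]], str]  # hypothetical: history -> attempted action


@dataclass
class GameMaster:
    """Toy stand-in for a Game Master; not the Concordia API."""

    # Hypothetical LM call: (player name, attempted action, history) -> event text.
    resolve: Callable[[str, str, List[str]], str]
    history: List[str] = field(default_factory=list)

    def step(self, players: Dict[str, Player]) -> None:
        """Ask each player for an action and log the outcome the GM decides on."""
        for name, act in players.items():
            attempt = act(self.history)  # players see the shared event log
            self.history.append(self.resolve(name, attempt, self.history))


def alice(history: List[str]) -> str:
    return "campaign door to door"


def bob(history: List[str]) -> str:
    # Bob reacts to the most recent event, if there is one.
    return "respond to the latest news" if history else "wait"


gm = GameMaster(resolve=lambda name, attempt, history: f"{name} tries to {attempt}.")
gm.step({"Alice": alice, "Bob": bob})
print(gm.history)
```

Keeping the environment logic in one place (the GM) is what lets the same player agents be dropped into different scenarios.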

🔗 Read Full Paper, Explore GitHub Repo

8. Beyond ChatBots: ExploreLLM for Structured Thoughts and Personalized Model Responses

🌐 Author(s): Xiao Ma, et al. from Google

📅 Publication Date: Dec 01, 2023

✨ Key Insights:

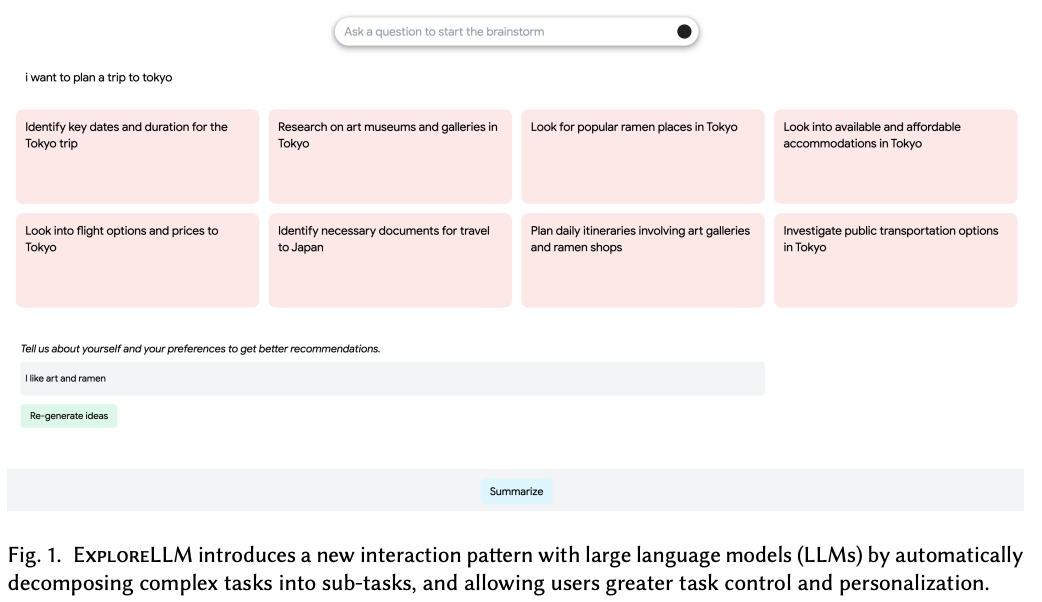

What’s New? They introduce ExploreLLM, which allows users to structure their thoughts, explore different options, navigate through choices and recommendations, and more easily steer models toward more personalized responses.

Behind the New. They report that presenting users with decomposed tasks helps them think and structure their thoughts, and that users found ExploreLLM helpful for planning.

So, How can we use this? The code is yet to be open-sourced, but you can borrow the idea: for task planning requests, share the decomposed sub-tasks with the user for a better UX.
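As a starting point, the decomposition can be kept as a small tree that the UI renders for the user. The sketch below is an assumption-laden illustration, not ExploreLLM's implementation: `TaskNode`, `expand`, and `render` are hypothetical names, and `toy_decompose` is a lookup table standing in for an LLM call that proposes sub-tasks.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class TaskNode:
    title: str
    children: List["TaskNode"] = field(default_factory=list)


def expand(node: TaskNode, decompose: Callable[[str], List[str]], depth: int) -> None:
    """Recursively attach sub-tasks proposed by `decompose`, up to `depth` levels."""
    if depth == 0:
        return
    node.children = [TaskNode(title) for title in decompose(node.title)]
    for child in node.children:
        expand(child, decompose, depth - 1)


def render(node: TaskNode, indent: int = 0) -> List[str]:
    """Flatten the tree into indented bullet lines to show to the user."""
    lines = ["  " * indent + "- " + node.title]
    for child in node.children:
        lines.extend(render(child, indent + 1))
    return lines


def toy_decompose(task: str) -> List[str]:
    # Hypothetical stand-in for an LLM call; unknown tasks get no sub-tasks.
    table = {
        "Plan a trip to Tokyo": ["Book flights", "Choose a hotel", "Draft an itinerary"],
    }
    return table.get(task, [])


root = TaskNode("Plan a trip to Tokyo")
expand(root, toy_decompose, depth=2)
print("\n".join(render(root)))
```

From here, letting the user pick a sub-task and add preferences before the next model call is the natural next step.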

Stay curious, and until next week!